Updating a RISC-V system to its latest Yocto release

Having an RZ/Five-based RISC-V board lying around in my office made me wonder what its latest supported GNU/Linux software would be. So I dusted off the old SMARC-based EVK and connected it up.

Sure enough, it booted from its internal eMMC, as no SD card was plugged in. With the board working, it should not be too difficult to get the software to the latest version. The route I chose was not without problems, but instead of glossing over them, I decided to write everything down. Maybe others can learn from my errors.


MicroPython on Zephyr - MCXN947

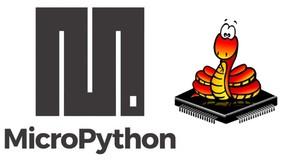

In my recent post on MicroPython I looked at the Zephyr port of MicroPython on the i.MXRT1060 EVK. As that board has an SD card connector populated, I was able to use a VFAT-formatted SD card for storing Python programs. For the FRDM-MCXN947 the situation is different. Although the MCXN947 microcontroller features an SD controller, the SD card connector on the Freedom board is not populated, probably to save money. As I am a software guy, soldering on this SMD connector is more difficult than looking for software alternatives, so let's find out if we can use the internal flash or the external QSPI to hold a file system instead.


MicroPython on Zephyr - i.MXRT1060

Not having looked at the MicroPython "port" for the Zephyr operating system before, I decided to check its current state. With an MIMXRT1060-EVK on my desk, I tried it on this platform.


Transferring Files Over TCP With Bash

There are times when I have Ethernet working on an embedded GNU/Linux board, but no tool available to actually transfer files. The latest example is the Yocto build for an i.MX evaluation board from NXP that I just built. The system lives on the SD card, so adding a package would mean reconnecting the SD card to my development machine, copying the file(s) and putting the card back into the board. And I am just too lazy for that. The EVK has an IP address on my local network, so there must be an easy way to transfer a file with standard tools, right?


Zephyr on NXP's MCX-N

The MCX-N series was first announced in 2022, and I finally have an MCX-N eval board from NXP on my table. The FRDM-MCXN947 eval board has a nice form factor, so I am eager to see if I can get Zephyr to run on it. The Zephyr documentation for the eval board seems to indicate that this should be easy to do. Let's find out.


Scrub Your Disks Periodically!

The smartmontools subsystem of my Debian GNU/Linux system started sending me mails that one of my hard disks was requiring attention. The mail warned that 2 Offline uncorrectable sectors were detected on the drive. So that's when I decided it was time for a maintenance interrupt to look after the "mildly sick" disk.


A Variety Of Screenshot Tools

As I learned recently on Mastodon, there are multiple candidates for screenshot programs for GNU/Linux systems. Until now, I was pretty happy with GNOME's built-in gnome-screenshot tool, but I decided to take a look at the other contestants and see what the differences are.


Hardware Independent Accelerated Video Processing in Linux

Thinking about the significant amount of disk space that movies use, I decided to revisit the question of transcoding them for archival purposes. I was also interested to see how much of the processing of movie files can be done in dedicated hardware instead of hogging the main CPU. Using an AMD Ryzen-2400G based desktop machine, I decided to find out if there is dedicated hardware that I can use from the standard GNU/Linux applications for movies. In hindsight, I would never have expected to spend so much time on this post, but then again I now have a better understanding of many concepts in this area. Hopefully you, my dear reader, can profit from it too.


Using Tags in GThumb

I guess most people have their own way of organizing lots of digital images efficiently. Most of them probably use databases for organization, even though there are standardized ways to put metadata into the image files themselves. This blog post details my approach, which manipulates only the image files rather than putting a database "on top" or relying on other abstractions external to the files.

The GThumb application from the GNOME Project is my preferred tool to organize digital images. Until I wanted to work on only subsets of the original images, the standard functionality was good enough for my very amateurish needs. In previous years we manually copied individual files into separate directories for further processing. Such a procedure leaves a lot of duplicate files behind and does not record the selection process in the original files themselves. After cleaning up the leftovers from those previous years, I set out to establish a better procedure this time. The aim was an easy process for selecting the pictures relevant to different groups of people, all the while recording the selection in the original image files. The tagged files are then collected with a short script into individual directories for further processing, e.g. for creating a picture book out of them.


VM Snapshots - I Love Them!

A while ago, as VirtualBox was losing its attractiveness due to license changes, I converted my virtual machines to KVM ones managed by virt-manager. One of the features that I just love is the capability to take snapshots of the hard disks. As I do not know of an easy way to do this from the GUI, I simply use virsh, which is part of the libvirt core distribution. Before trying something "dangerous", just take a snapshot. If the experiment was not successful, it takes just a simple command to undo all the latest changes. I learned to love this feature lately when I tried crazy stuff like converting a VM's hard disk from MBR to GPT partitioning: when I ended up with an unbootable system, I could simply revert to the previous snapshot. Having this feature available empowered me to try things that may break the system.

This short blog post just shows, with real transcripts, how this works from the command line.
